[Updated Oct. 4, 2021, with more information on shooting and editing focus-adjustable video, as well as supported iPhones.]

At the Apple Event launching the new iPhone 13 family of phones, Apple made a big deal of its newest iPhone cameras. In addition to longer battery life and Super Retina XDR displays (which provide greater resolution and brighter images), Apple also added optical image stabilization, which should reduce blurry stills and shaky video.

The Pro versions of these phones can also record in ProRes, though the feature will not be available at launch. Clearly, Apple realizes that cameras are one of the most compelling reasons to upgrade to new phones.

For filmmakers, two key highlights are recording images at up to 4K 60 fps in Dolby Vision HDR, plus “Cinematic Mode.” Cinematic mode is supported on all iPhone 13 cameras.

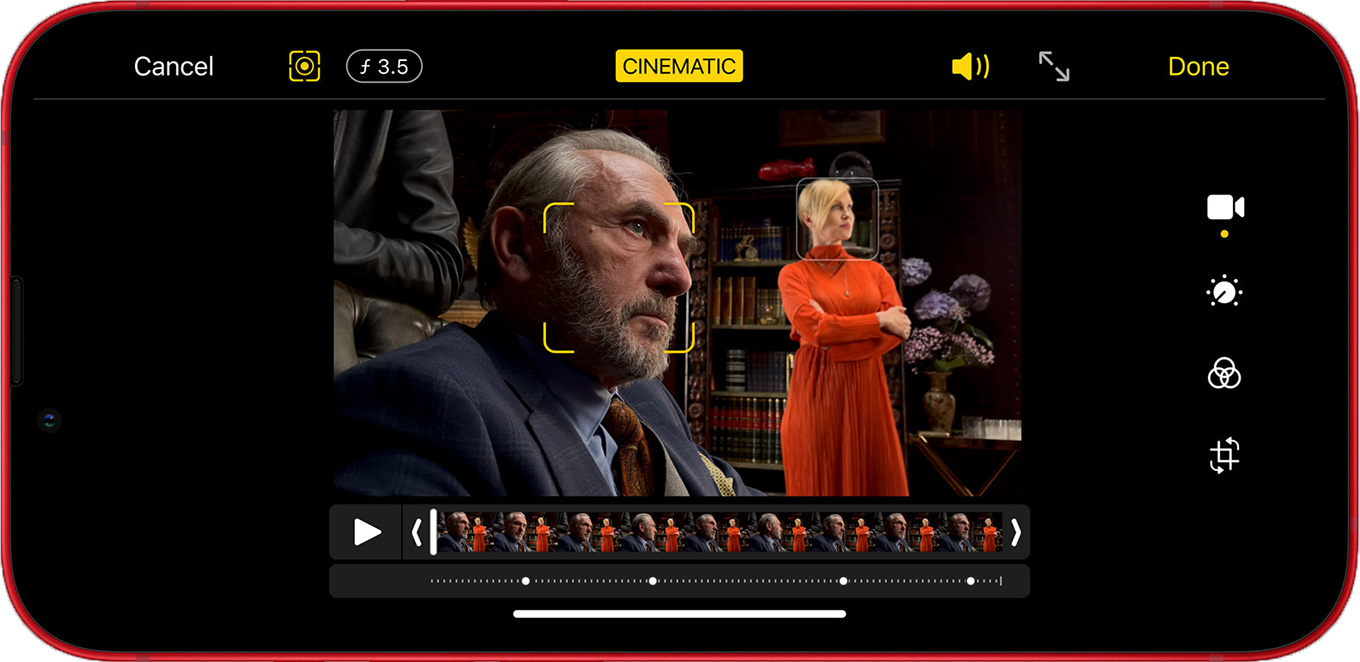

Cinematic Mode is the ability to automatically roll focus from one element in the frame to another. Peter Wiggins described it as “an automatic focus puller.” While rolling focus has long been a standard filmmaking technique to draw attention to specific portions of the frame, it has never existed in a smartphone, mainly because the image sensors were designed to make sure every part of the frame, from close-up to far away, was in focus.

But what was NOT mentioned during the event was something extraordinary. Hidden deep inside Apple’s press release on these cameras was the following statement:

“The ability to edit the depth-of-field effect will be available in future updates to iMovie for macOS, Final Cut Pro, and requires macOS Monterey…. For creative control, the focus can be changed during and after capture, and users can also adjust the level of bokeh in the Photos app and iMovie for iOS, and coming soon to iMovie for macOS and Final Cut Pro.”

Whoa!!

Very soon, we will be able to REFOCUS video in post, something I have long thought would be impossible. Not all video, only video from an iPhone 13. But once Apple shows it can be done, other high-end camera companies will be heavily pressured to find a way to follow suit. As will other NLEs.

As someone who almost lost his job due to an out-of-focus interview, this announcement is beyond stunning.

UPDATE – 10/4/21

Here’s a link to an Apple support article on how to shoot and edit Cinematic mode video.

As well, I contacted Apple to learn more; specifically asking if this new capability was using the lidar now included in the latest iPhone. Not surprisingly, they won’t discuss future products, saying they’ll “have more information on Cinematic mode video in FCP and iMovie for macOS when that feature ships.”

However, Apple reminded me that: “We have great new capabilities to edit Cinematic mode video in the latest version of iMovie for iOS / iPadOS (v 2.3.3). Namely the ability to add and delete focus points and modify the depth of field effect.”

Finally, while shooting Cinematic mode video requires any iPhone 13 (not just the Pro versions), editing Cinematic mode video can be done using:

macOS will support this feature in a future update.

SUMMARY

When will this happen? Naturally, Apple doesn’t say – except to state that it will require an iPhone 13 Pro, macOS Monterey, and Final Cut Pro or iMovie. But, looking to the future, both macOS Monterey and new laptops are coming soon. Could these signal the release of this new camera technology along with a significant update to Final Cut?

This has the potential to be a watershed moment for post-production: refocusing images after the fact.

16 Responses to Stunning News: Refocus iPhone 13 Video in Post!

Congratulations, Larry, on what seems to have been a busy move to Mass. and best wishes for the future. Had a wonderful time for a few weeks in Palmer once as part of an exchange scheme called Operation Friendship.

Pleased that you read the Apple press release and gave me hope that post focusing and the iPhone 13 release with ProRes will be a catalyst for new features in FCP.

I have been trying out a tiny Sony ZV-1 which, although designed for blogging, delivers quality I am very pleased with. It has a Product Mode that pulls accurate focus very quickly and automatically. It is another example of a company, along with others, bringing innovation with new products.

Regards

Alan N

Alan:

Thanks for the update. There are so many different new cameras, it is impossible to keep them all straight.

Larry

It’s very interesting, but I’m not sure it is quite so revolutionary. Tell me I’m wrong, but isn’t this the same as portrait mode for photos? There you can change the (apparent) depth of field, but not the focal point itself. The bokeh is all artificial, so you dial it up and down. That means you focus on point A and point B appears out of focus. You can then bring point B into focus but A stays in focus. You can’t shift focus from A to B. Do we think cinematic mode is doing something different and really enabling proper pull focus?

Mark:

According to Apple, this feature is definitely rolling focus from one element in the frame to another – foreground to background, for example. So, yes, pulling focus in post, not just adjusting how out of focus the background is.

Larry

Fascinating technology indeed. Larry, do you know if the rolling focus tech presupposes that key elements of the shot are already in focus in the original (with deep DOF) and that the software will then be user-guided to drop certain portions of the picture out of focus at will? This seems quite doable given existing tech. If, however, an out-of-focus original shot can be made to be in focus, then that indeed verges on magic!

Chris:

GREAT question! My guess is that the entire frame is recorded in focus, then a selective blurring is applied to portions of the frame based upon settings we provide. I’m not sure that actual time-of-flight data is being recorded for each image.

For this reason, though I wish it would, it probably can’t focus a blurry shot.

Larry

Very, very interesting. Perhaps it’s based on the same sort of work that light field cameras such as the Lytro or Raytrix built. A quick Wikipedia search shows that this type of technology has been investigated since 1908! There’s a wonderful video produced by No Film School that explains how the Lytro Cinema camera worked.

https://www.youtube.com/watch?v=4qXE4sA-hLQ

Robert:

Thanks for the link. We’ll need to wait for more data from Apple before we really know what they are doing.

Larry

Seems the Light camera, which employed 16 lenses and logic chips, could and did have the ability to re-focus. I tested one for a month, but the software was clunky and the background blurring annoying. Ultimately, I returned it for a full refund. Maybe Apple engineers got it right.

Ken:

We shall have to see.

My guess – and it is only a guess – is that time-of-flight data is not recorded. Rather, the entire image is recorded in-focus, with the ability to blur portions of the frame later, after recording. But, perhaps it is more sophisticated than this. We shall see.

Larry

Larry,

Congratulations on completing your move. I hope that soon all will be in order and you can get back to doing the things you most enjoy, and I am sure that moving is not one of them!

Having just purchased and started using an iPhone 13 Pro Max, I have been experimenting with Cinematic Video. As you know, this footage can be edited right on the iPhone, and one can set the focus in post (i.e. after saving the video) on the iPhone. I have tried this and it works very nicely. This first attempt at Cinematic video on the iPhone 13 is not perfect, but it is not at all bad for the initial effort and is quite fun to experiment with and use. I look forward to the update to FCP that allows me to lock focus in FCP. The computational power of modern smartphones is truly something we could not have imagined a few years ago. Back in the 1950s my father was an avid photographer, and I can remember him spending hours trying to get a good photo of spider lilies around our home. I often think how magical our current cameras and software would seem to him.

Tom

If your camera can shoot the entire scene in focus, then you’re done; it’s just a matter of how you choose to process the image for creative reasons. It seems clear to me that “camera” manufacturers need to roll out cameras with more and more computer tech built in: computational photography. The traditional smaller-chip semi-pro camcorder space, with its long depth of field, will also benefit from this tech.

Thanks for the article, Larry. This is so exciting! Rolling focus in iPhone 13, to eventually come to Final Cut perhaps?? – pretty cool. I feel like we’re on the cusp of a tech transformation to be able to pull focus in post at will in the future when and wherever the editor may want! However, if they’ve been investigating this since 1908, then it’s about time LOL!

I wonder if Apple engineers figured out how to incorporate its LiDAR scanner data into the Cinematic-mode image. Since Apple owns the ProRes format, it is free to do this.

The LiDAR scanner does know time-of-flight (ToF). Apple experimented with the scanner on the iPhone 12 but the A14 processor had its limitations. Their original objective is to use it in conjunction with augmented reality (AR) to be able to insert virtual items behind closer real objects.

If this is the case, each image knows the distance (up to 5 meters) of the view’s elements and can probably develop layer properties allowing a truly roll-like focus, aka “smart bokeh”.

I suspect the LiDAR Scanner with the much faster A15 processor has enabled Apple to tie in ToF with camera imaging. It’ll probably be a crude adaptation in the 13 series but wait until it’s perfected in iPhone 14!

I wrote this before reading everyone’s comments, and it seems that many seem to agree with what I came up with. Does this below sound right?

Regarding the ability to edit depth of field, assuming you had a video or photograph where everything is in equal focus – (a long depth of field), Is it possible that the refocus feature in editing would just defocus the non selected area of a video or photograph?

This would give you control over shallow depth of field in post.

I don’t see how it is possible to bring an area that was shot as defocused into focus. Unless there were duplicate files being shot simultaneously. One in rolling focus and the other with a longer depth of field.

Jim:

This part is correct: “Regarding the ability to edit depth of field, assuming you had a video or photograph where everything is in equal focus – (a long depth of field), Is it possible that the refocus feature in editing would just defocus the non selected area of a video or photograph.”

Apple is coy on how they do this, but, in essence, they are doing a selection similar to selecting the subject in Photoshop. Once the iPhone defines the selected object (generally the foreground), it blurs everything else. Therefore, it only needs to shoot the entire image in focus, then apply blur afterward to everything it feels is not the selected subject.

They are not using Lidar or time of flight data.

Larry
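
The approach described in this reply (record the whole frame sharp, select a subject, then blur everything else) can be sketched in a few lines of Python. This is only an illustration of that guess, not Apple's implementation, which Apple has not disclosed. The function names `box_blur` and `refocus`, the tiny grayscale frame, and the hand-written subject masks are all assumptions made for the example; a real system would need to derive the mask automatically and would use a far more convincing blur.

```python
def box_blur(image, radius=1):
    """Blur a 2D grid of grayscale values with a simple box filter."""
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0.0, 0
            # Average every pixel in the (clipped) square window around (x, y).
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[ny][nx]
                    count += 1
            blurred[y][x] = total / count
    return blurred


def refocus(image, subject_mask, radius=1):
    """Keep pixels where subject_mask is True sharp; blur all the others."""
    blurred = box_blur(image, radius)
    return [
        [image[y][x] if subject_mask[y][x] else blurred[y][x]
         for x in range(len(image[0]))]
        for y in range(len(image))
    ]


# Hypothetical all-in-focus frame: a flat subject on the left half,
# a high-contrast checkerboard "background" on the right half.
frame = [
    [100, 100, 0, 255],
    [100, 100, 255, 0],
    [100, 100, 0, 255],
    [100, 100, 255, 0],
]

# Hand-made masks standing in for whatever subject selection the phone does.
focus_left = [[x < 2 for x in range(4)] for _ in range(4)]
focus_right = [[x >= 2 for x in range(4)] for _ in range(4)]

left_sharp = refocus(frame, focus_left)    # subject sharp, background soft
right_sharp = refocus(frame, focus_right)  # background sharp, subject soft
```

Because both results are computed from the same sharp frame, switching masks over time looks like a focus pull. It also shows the limitation raised in the comments: nothing here can recover detail from a frame that was recorded out of focus.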