Several times a week, I get requests to do a webinar on working with 4K media. This request always puzzles me because editing 4K media is no different than editing any other media. The process is exactly the same.

NOTE: For the purpose of this article, I’m lumping all high-resolution media – 4K, 5K, 6K, 8K – into the single term “4K.”

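But, in thinking about this further, while editing 4K media is the same as editing HD, the workflow surrounding 4K is not the same. The differences are in computer speed, storage, the choice of proxy, camera native, or optimized media, reframing shots, HDR support, and export.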

So, let’s take a look at each of these.

COMPUTER SPEED

Most computer systems shipped in the last four years are able to easily edit 4K media. The challenges are not in the editing, but instead with multicam editing and with render and export speeds.

Multicam 4K editing requires a fast processor and editing using proxies (see below). Even beefy systems can have problems handling 4K multicam files, especially if your storage is slow or you are shooting with lots of cameras. Editing with proxy media stored on an SSD solves many of these issues.

Render and export speeds are GPU dependent for both Premiere and Final Cut. Older systems with slower GPUs will struggle with these larger files. While we can use proxy files for both multicam and general editing, exporting master files requires using the 4K master files, which means that slower systems will tend to choke due to GPUs that can’t handle the task.

STORAGE

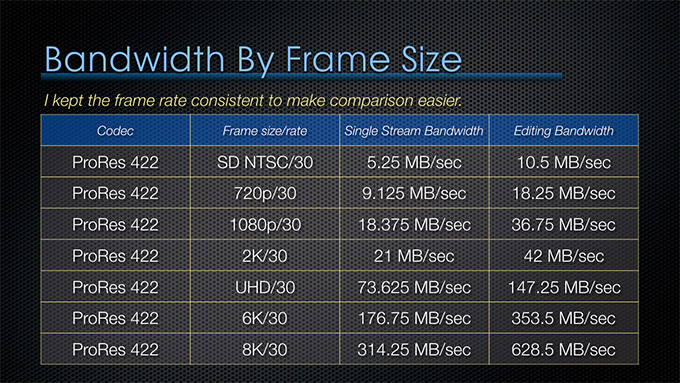

Just because your storage system can easily handle HD media does not mean that it is ready for serious 4K editing. Let me illustrate.

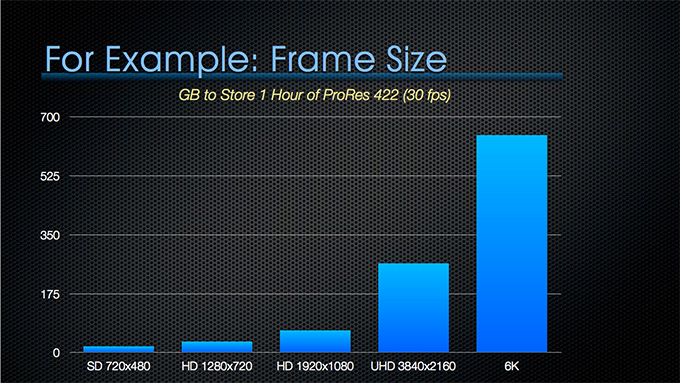

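As frame size increases, the size of your files in terms of GB escalates dramatically. A UHD 4K frame (3840 x 2160) has four times the pixels of a 1080 HD frame (1920 x 1080), so a 4K file is 4 TIMES bigger than a 1080 HD file of the same duration, codec, and frame rate.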

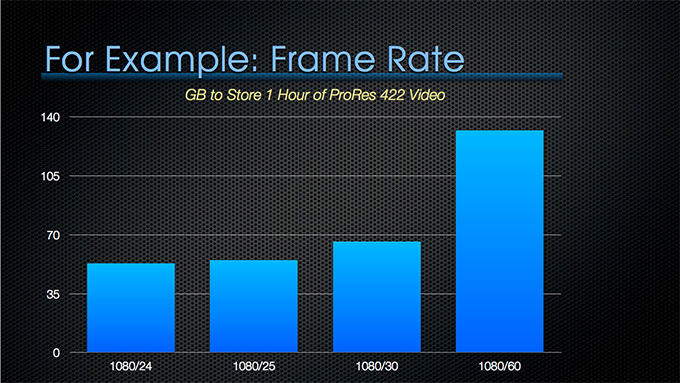

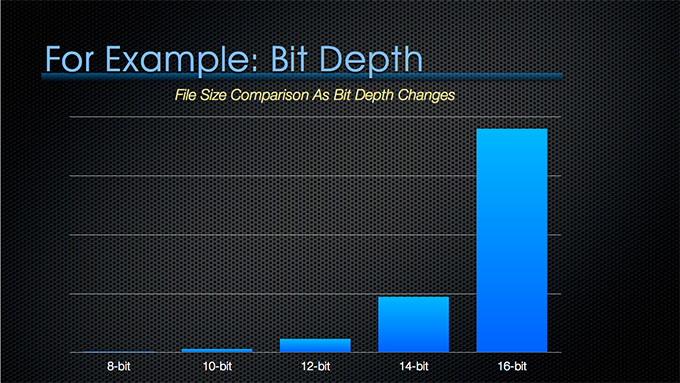

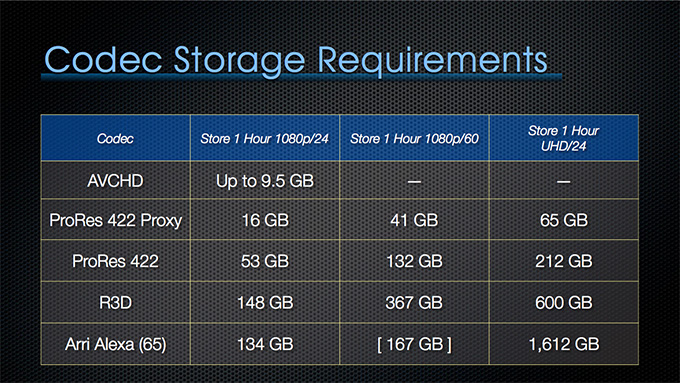

NOTE: These stills are all taken from my recent webinar: “Maximize Your Storage.”

As frame rates increase, the size of your media also increases.

And as bit-depth increases, the size of your media also increases – dramatically!

When you combine all of these, your 4K files explode into massive file sizes.
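
To put rough numbers on how these factors multiply, here is a minimal Python sketch. It assumes uncompressed video with three full-resolution color samples per pixel, which is an assumption made only for illustration. Real camera codecs compress heavily, so actual files are far smaller; the point is how frame size, frame rate, and bit depth multiply together. The function name and sample formats are hypothetical.

```python
def uncompressed_mb_per_second(width, height, fps, bit_depth, channels=3):
    """Data rate of uncompressed video in MB/s (1 MB = 1,000,000 bytes).

    Assumes every pixel stores `channels` full-resolution samples
    (no chroma subsampling, no compression); an illustration only.
    """
    bits_per_frame = width * height * channels * bit_depth
    return bits_per_frame * fps / 8 / 1_000_000


# Three sample formats, chosen for illustration.
hd = uncompressed_mb_per_second(1920, 1080, fps=30, bit_depth=8)
uhd = uncompressed_mb_per_second(3840, 2160, fps=30, bit_depth=8)
uhd_60p_10bit = uncompressed_mb_per_second(3840, 2160, fps=60, bit_depth=10)

print(f"HD 30 fps, 8-bit:   {hd:8.1f} MB/s")
print(f"UHD 30 fps, 8-bit:  {uhd:8.1f} MB/s ({uhd / hd:.1f}x HD)")
print(f"UHD 60 fps, 10-bit: {uhd_60p_10bit:8.1f} MB/s ({uhd_60p_10bit / hd:.1f}x HD)")
```

Under these assumptions, quadrupling the pixels, doubling the frame rate, and moving from 8-bit to 10-bit multiplies the data rate by 4 x 2 x 1.25 = 10.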

Additionally, the speed (bandwidth) of your storage may be adequate for HD editing, but insufficient for 4K, depending upon how your storage is connected, whether it uses SSDs or spinning media and how many drives are attached to your system.

Editing that is trivial at HD sizes can totally max out a single hard drive when you are working with 4K images. (While you are editing, most NLEs need storage bandwidth equal to 2x the data rate of the format of your project clips.)
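
As a quick check of whether a drive can keep up, the 2x rule of thumb can be sketched in Python. The data rates and the drive speed below are hypothetical placeholders, not measurements; substitute the figures for your own codec and storage. Multiplying by the number of simultaneous streams for multicam is likewise an assumption of this sketch.

```python
def required_bandwidth(clip_mb_per_s, streams=1, headroom=2.0):
    """Storage bandwidth (MB/s) needed while editing.

    headroom=2.0 follows the 2x rule of thumb in the text; scaling by
    `streams` for multicam is an assumption of this sketch.
    """
    return clip_mb_per_s * streams * headroom


def storage_is_fast_enough(storage_mb_per_s, clip_mb_per_s, streams=1):
    """True if the storage can sustain the required bandwidth."""
    return storage_mb_per_s >= required_bandwidth(clip_mb_per_s, streams)


# Hypothetical example figures (placeholders, not measurements).
HARD_DRIVE = 150  # MB/s sustained, single spinning drive
HD_CLIP = 20      # MB/s
UHD_CLIP = 80     # MB/s, four times the HD figure

print(storage_is_fast_enough(HARD_DRIVE, HD_CLIP))   # True: needs 40 MB/s
print(storage_is_fast_enough(HARD_DRIVE, UHD_CLIP))  # False: needs 160 MB/s
print(required_bandwidth(UHD_CLIP, streams=4))       # 640.0 for a 4-camera multicam
```

With these placeholder numbers, the same drive that handles HD with room to spare falls short for a single 4K stream, and a four-camera multicam clip needs several times more.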

So, the first thing you need to look at when moving to higher resolutions is whether your storage is both big and fast enough.

NOTE: I have an entire webinar devoted to explaining everything you need to know to make the most of your storage, whether you are editing Avid, Adobe, or Apple. Click here to learn more.

PROXY vs. CAMERA NATIVE vs. OPTIMIZED

Both Premiere and Final Cut support proxy editing. This allows you to create lower-resolution, lower-bandwidth files for editing, then quickly replace them with camera native or master files prior to final export.

Here’s a video that explains the new proxy workflow in Adobe Premiere Pro CC.

And here’s an article that explains how and when to choose to use native, optimized, or proxy media in Apple Final Cut Pro X.

REFRAMING SHOTS

One advantage to shooting higher resolutions is the ability to reframe a shot when you edit that image into a lower-resolution project. (For example, shooting 4K to edit into an HD project.)

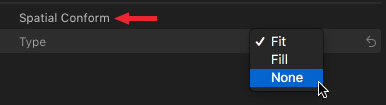

For example, by default, FCP X always scales any imported image – regardless of the resolution of the source image – so that it fills the frame. You can change this using Spatial Conform:

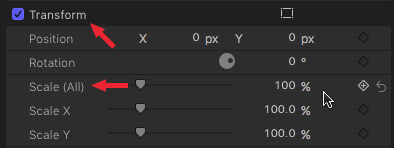

Changing Spatial Conform to None allows rescaling in the Transform menu, with the highest possible image quality as long as you don’t scale the image larger than 100%.

Premiere, on the other hand, defaults to displaying the imported image at 100% size, regardless of how that fits into the sequence.

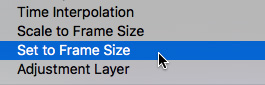

You can change this behavior on a clip-by-clip basis by right-clicking the clip and selecting “Set to Frame Size.”

NOTE: Set to Frame Size provides higher quality during rescaling than Scale to Frame Size.

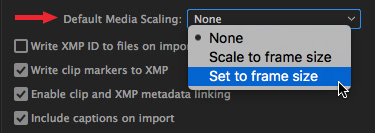

Even better, you can change the default behavior through a brand-new preference setting in the Media section: Default Media Scaling.

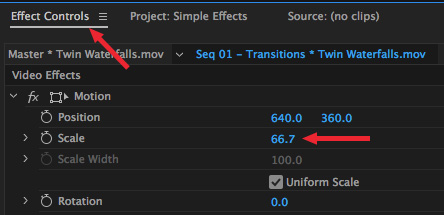

Then, in either case, adjust the size of the image in your sequence in the Motion section of Effect Controls. Remember, just as with Final Cut, you retain the highest image quality when you keep all scaling below 100%.
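
To see how much room for reframing a given source leaves you, here is a small Python sketch. It assumes that scale is expressed as a percentage of the source clip's native pixel size, so that 100% means one source pixel per sequence pixel. The function names are hypothetical.

```python
def fill_scale_percent(src_w, src_h, seq_w, seq_h):
    """Scale (percent of native source size) at which the source
    just fills the sequence frame with no empty edges."""
    return max(seq_w / src_w, seq_h / src_h) * 100


def reframing_headroom(src_w, src_h, seq_w, seq_h):
    """How far you can push in from fill-the-frame before scale
    exceeds 100% and the image starts to be enlarged."""
    return 100 / fill_scale_percent(src_w, src_h, seq_w, seq_h)


# UHD source in a 1080 HD sequence.
print(fill_scale_percent(3840, 2160, 1920, 1080))  # 50.0
print(reframing_headroom(3840, 2160, 1920, 1080))  # 2.0

# HD source in an HD sequence: no room to push in.
print(reframing_headroom(1920, 1080, 1920, 1080))  # 1.0
```

In other words, a UHD clip in a 1080 HD sequence fills the frame at 50% and can be pushed in up to 2x before scaling exceeds 100%.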

HDR SUPPORT

The UHD standard requires, among other things, 4K images with a minimum of 10-bit depth. This means that we need to shoot our original media to meet this spec.

Shooting with an 8-bit codec – such as AVCHD, or many versions of MPEG-4 – then upconverting later will not yield the same results as shooting 10-bit or greater originally, for the same reason that shooting SD then upconverting to HD is not the same as shooting HD originally.

Most RAW and Log files are 10-bit or greater. The highest bit-depth video currently available is 16-bit. 8-bit files yield 256 discrete gray scale or color saturation levels. These are the values we have used since the advent of digital video in standard-def. 10-bit files allow up to 1,024 gray scale levels. (And, just for reference, 16-bit files support up to 65,536 values.)
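
These level counts come straight from powers of two, as this short Python sketch shows (the function name is hypothetical):

```python
def levels(bit_depth):
    """Number of discrete values a sample of the given bit depth can hold."""
    return 2 ** bit_depth


for bits in (8, 10, 16):
    print(f"{bits:>2}-bit: {levels(bits):,} levels")
```

Each added bit doubles the number of levels, which is why 10-bit offers four times as many as 8-bit.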

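The greater the bit-depth, the bigger the files. Bit depth is an exponent (the number of levels is 2 raised to the bit depth), so each added bit doubles the number of levels, while the bits stored per pixel, and with them uncompressed file sizes, grow in proportion to the bit depth.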

EXPORT

Exporting 4K files is no different from exporting any other file, except that these master files are a lot bigger than we are used to and that rendering and exporting benefit from a fast GPU for image processing.

SUMMARY

Editing 4K images really isn’t about the editing; it’s about media management and making sure your storage can properly store and play these massively large files.
