A question I am asked frequently is: “Why is it bad to scale video, or still images, larger than 100%?”

First, regardless of what video editing software you use, all video images are bitmapped. This means that an image is composed of small rectangles of color – called pixels – organized into rows and columns. (Most video formats use square pixels, but some, like DV and HDV, do not.)

By definition, a pixel can only contain one color and this rule is never violated.

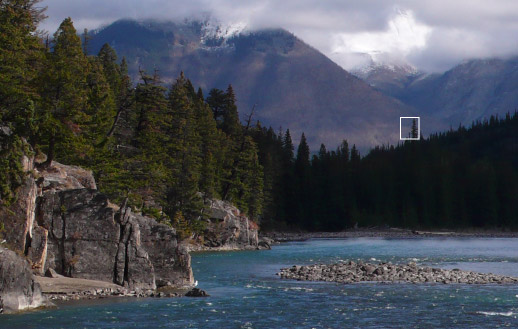

For example, see the white rectangle in the image above. Let’s zoom into that so that we can see the individual pixels that make up the image of the tree poking above the rest of the forest.

Notice how all these pixels are organized into perfect rows and columns? Also, notice that each pixel has only one color. However, there are so many of them that they can create almost any shape and texture when you zoom back to see the entire picture.

These pixels are fixed not only in color, row, and column, but also in size.

When you zoom using the lens of your camera, you are not changing the size of the pixels; you are changing the size of the image passed from the lens to the sensor in the camera. Once a pixel is recorded into a video frame, that pixel is locked in size, shape, and position.

NOTE: In spite of the best efforts of Harry Potter to convince us otherwise, the moving images that we see in film and video are actually a rapidly changing series of still images, each of which is composed of rows and columns of pixels.

Now that we’ve established that pixels are fixed in size, shape and position, it is easy to understand why enlarging a video image is not a good idea – all we are doing is taking the existing pixels and making them fatter.

Here, for instance, we are zooming closer to the top of the tree. Notice how the pixels are bigger, and the shape of the tree is much less distinct. Fatter pixels equal a blurrier image. Scaling an image larger than 100% stretches the existing pixels to expand to fill a larger space.
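To see what "fatter pixels" means in practice, here is a minimal Python sketch of the simplest kind of enlargement, usually called nearest-neighbor scaling. The function name `upscale_nearest` and the tiny sample image are made up for illustration, and real editing software generally uses more sophisticated filtering. The underlying limit is the same, though: no new detail is created, the existing pixels just cover more space.

```python
def upscale_nearest(pixels, factor):
    """Enlarge a grid of pixels by a whole-number factor.

    Every source pixel is simply repeated factor x factor times,
    so the result is bigger but contains no new information.
    """
    scaled = []
    for row in pixels:
        # Repeat each pixel horizontally...
        wide_row = [value for value in row for _ in range(factor)]
        # ...then repeat the widened row vertically.
        scaled.extend(list(wide_row) for _ in range(factor))
    return scaled


# A hypothetical 2 x 2 image; each letter stands for one pixel color.
image = [
    ["G", "B"],
    ["B", "G"],
]

for row in upscale_nearest(image, 2):
    print(" ".join(row))
```

At 200%, each original pixel becomes a 2 x 2 block of the same color. The image has four times as many pixels, but exactly the same amount of detail.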

In general, you’ll notice a drop in quality once you scale an image more than about 104%. Your audience will notice the drop in quality once the image gets larger than about 110%.

Well, yes and no. There are four “magic” video image sizes:

And that’s it. Yes, there are larger image sizes – currently lumped into the category of “digital intermediates,” but they are used for projects designed for theatrical projection, not broadcast TV or web distribution.

This means that at the instant your camera records an image, the pixels are locked into one of these four sizes, depending upon how you configured your camera, and from that point on, the pixel size and dimensions can’t be changed without affecting quality.

Interlacing makes this worse. In fact, enlarging interlaced footage looks worse than enlarging progressive images by the same amount.

For this reason, I always encourage filmmakers to shoot 1080p or 720p. A distant third choice is 1080i. It is far easier – and provides higher quality – to convert a progressive image to interlaced, than to convert an interlaced image to progressive.

Scaling an image smaller does not cause the same problem, because you are squeezing more pixels into a smaller space. This is like squeezing water from a sponge: even after you squeeze the water out, the sponge is still wet. You have plenty of pixels (um, water) left over to make the smaller image.
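As a rough illustration of why shrinking is safe, the sketch below halves an image by averaging each 2 x 2 block of source pixels into one output pixel. Averaging is only one common way to downscale and is an assumption here, not a description of what any particular editing application does. The function name `downscale_by_half` and the brightness values are invented for the example.

```python
def downscale_by_half(pixels):
    """Halve width and height by averaging each 2 x 2 block of values."""
    out = []
    for y in range(0, len(pixels) - 1, 2):
        row = []
        for x in range(0, len(pixels[y]) - 1, 2):
            # Four real source pixels contribute to every output pixel.
            total = (pixels[y][x] + pixels[y][x + 1]
                     + pixels[y + 1][x] + pixels[y + 1][x + 1])
            row.append(total / 4)
        out.append(row)
    return out


# A hypothetical 4 x 4 grayscale image (brightness values from 0 to 100).
image = [
    [10, 20, 30, 40],
    [30, 40, 50, 60],
    [0, 0, 100, 100],
    [0, 0, 100, 100],
]

small = downscale_by_half(image)
print(small)
```

Every pixel in the smaller image is built from four pixels that actually exist in the source. Nothing has to be invented, which is the opposite of scaling up.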

With video, if you want to add moves or zooms in post, you need to shoot a larger image size than your project. For example, you could shoot a 1080p image and edit it into a 720p project. (Though, truthfully, it is often easier to just shoot the moves you need during production.)

For stills, the accepted practice is to import stills which have the same aspect ratio as your project, but include more pixels. For my projects, I create stills that are 2.5 times bigger than my video format.

For instance, the videos that I create for the web are always 1280 x 720. If I need stills that don’t need moves, I create them at the same size as my video project: 1280 x 720. If I need stills for moves or zooms, I create the stills 2.5 times larger than the project. For a 720p project, I create stills at 3200 x 1800. This allows me to zoom in, zoom out, or pan without scaling the still larger than 100%.
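The arithmetic behind those numbers can be sketched in a few lines of Python. The function names and the `headroom` parameter are hypothetical; the 2.5 multiplier is the one used above.

```python
def still_size_for_moves(project_width, project_height, headroom=2.5):
    """Size a still so it can be zoomed or panned without exceeding 100%."""
    return round(project_width * headroom), round(project_height * headroom)


def fit_percent(still_width, project_width):
    """Scale percentage at which the whole still exactly fills the frame."""
    return 100 * project_width / still_width


width, height = still_size_for_moves(1280, 720)
print(width, height)             # 3200 1800
print(fit_percent(width, 1280))  # 40.0
```

For a 1280 x 720 project, a 3200 x 1800 still fills the frame at 40% and shows its tightest view at 100%, so every move stays inside the safe range.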

When compared to still images, video is very low resolution. You don’t need to import gigantic images; in fact, your projects will look better if your stills are roughly the same size as your video format.

Here’s an article I wrote a while ago that goes into this subject in more detail.

Here’s the key point: If you want your images to look as good as possible, make sure you never scale them larger than 100%, regardless of what video editing software you use.

14 Responses to FAQ: Problems with Scaling Video

Here’s a question I have always had. Can’t you just put the clip into Motion or AE and use the camera at a higher focal length? That way you’re not scaling, but you’re using the camera at 180mm, thereby zooming in to the picture (and not scaling).

Does it work like that, or do AE and Motion just scale it up also?

Always wanted to know.

Thanks.

It doesn’t work that way.

Both Motion and AE are scaling bitmaps, as my article explains. They are just wrapping this scaling in different terms and a different interface. Same results.

Larry

Great article, and thanks for all your help, Larry!

Quick question: when I’m scaling 1080 footage to fit (perfectly) inside a 720 project, is there a “magic percentage number” you use to scale? For instance, sometimes 67% looks to fit perfectly inside the frame, but other times I think I’ve found FCP automatically “rounding” the number down to 66.67%. Or is it all the same in the final export? Thanks

Ben:

Interesting question….

My guess, because I don’t know, is that 66.67% would yield slightly better results than 67%. However, as with all things, do a test using your footage. If you can’t see a difference, then there isn’t a difference.

Larry
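For anyone who wants to check the numbers behind this exchange, here is a small Python sketch. It assumes a 1920 x 1080 source and a 1280 x 720 project, and the helper name `scaled_size` is made up for the example. It only shows the geometry, not how any particular editor resamples the image.

```python
from fractions import Fraction


def scaled_size(width, height, percent):
    """Return the size of a frame after scaling it by the given percentage."""
    factor = percent / 100
    return width * factor, height * factor


# The exact percentage that turns 1080 lines into 720 lines.
exact_percent = Fraction(720, 1080) * 100
print(exact_percent, "=", float(exact_percent))

for percent in (67, 66.67):
    w, h = scaled_size(1920, 1080, percent)
    print(f"{percent}% -> {w:.2f} x {h:.2f}")
```

The exact value is 200/3, or 66.666...%. At 67% the image comes out a few pixels larger than the 1280 x 720 frame, so its edges are cropped slightly; at 66.67% it overshoots by well under a pixel. Whether that difference is visible is still best settled by a test with real footage.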

Have you ever attempted (or considered) scaling 1080 footage in a 1080 project, knowing you’ll output to 720?

Um, I don’t understand the question. There’s no need to scale 1080 footage in a 1080 project. It edits in normally.

Larry

Sorry, I should have explained better: I mean scaling your 1080 footage larger (say, to 110-150%) for tighter framing or better composition.

Andrew:

Ah. You would be much better served to edit your sequence at 720 from the start. That way, you are always keeping your image quality as high as possible. Scaling pictures smaller does not cause quality issues. Scaling images larger does.

Larry

This is really fantastic information! Can you please answer this question for me? If you shoot a video at 1080p and edit it down or render the final video at 720p, is that considered scaling it down as well, or is the codec being recompressed in some way? I am not clear what the video editor is doing when it renders out a video at a lower resolution than it was shot. Is quality lost in any way? I know some people take UHD footage, 3840 x 2160, and render it out at 1920 x 1080, and say that footage has more detail than a video shot and rendered straight out at 1920 x 1080. Is that true? If it is, then it seems downscaling would increase quality, no? Please help me to understand. Thank you!!!

Kevin:

The images are being scaled, which means you are throwing away pixels that are not needed for the smaller-sized image. There is a lot of debate on whether scaling improves image quality. The best answer is “that depends.” If focus is soft, or there are problems with the image, scaling will often improve the results. Generally, though, shooting higher-resolution media and then scaling is done so that directors don’t need to figure out framing on set, but, rather, can decide exactly the size of shot they want in post.

There’s nothing “wrong” with this approach, except that scaling an image is not the same as changing the camera position or the camera angle, and scaling never changes the depth of field. For some people, that’s no problem, for others, it is.

Larry

Dear Larry!

We’ve got some trouble in Premiere CC. Unfortunately, we have to produce in 1080i. When scaling certain footage (in this example, it was from a Sony PJ780), there are bad artifacts in the result. Do you know why this happens? When zooming in on FS7 or FS5 footage, there is no trouble.

Here’s a picture link: https://i.imgsafe.org/a5bce61353.jpg

Thanks and best regards,

Clemens

Clemens:

You are seeing interlacing – the thin horizontal lines radiating off moving objects. I suspect the FS7 or FS5 are shooting a progressive image which is being converted to interlaced after the image is captured, which avoids these lines.

The only option is to convert the PJ780 footage to progressive before editing to try to minimize this problem.

Larry

Why does it look so much better (not good, mind you) to open a 320 x 240 video in QuickTime and then scale the playback window up by 300% or so than to take the same video clip and scale it up using Compressor?

Steve:

I don’t know, unless QuickTime is applying smoothing to the pixels.

Larry