I enjoy looking at new storage technology, measuring its speed and trying to figure out where this new gear fits in a media workflow.

Recently, as I was writing about upgrading my network, I discovered something that is making me change my process when measuring storage speed. (Read about it here.)

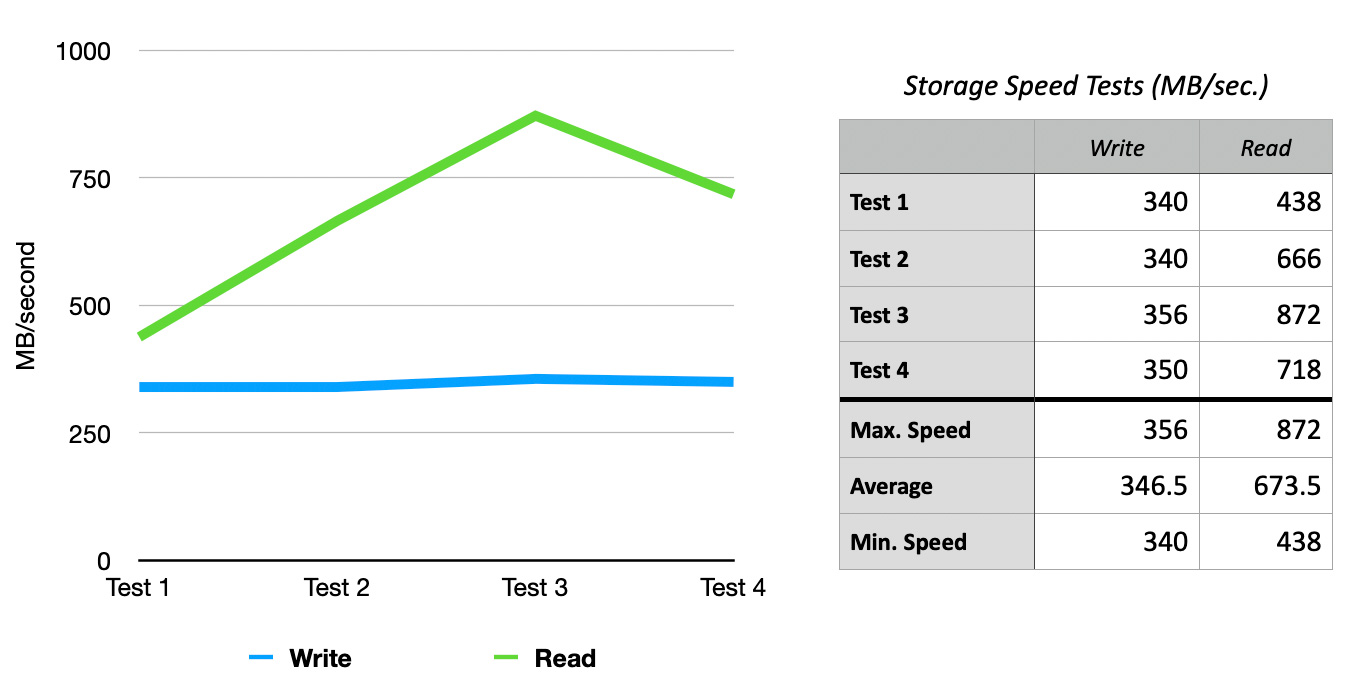

Here’s an example: the same storage hardware running the same test four times, each run about a minute apart. Write speeds were within 5% of each other, but read speeds varied by up to 100%!
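One way to express run-to-run variation like this is to measure the gap between the fastest and slowest run relative to the slowest run. By that measure, a read speed that doubles between runs is a 100% spread. Here's a minimal Python sketch, assuming that definition (the article doesn't say exactly how its percentages were calculated). The function name and the speeds below are hypothetical and illustrative, not the measured results.

```python
def percent_spread(speeds):
    """Return how far the fastest run exceeds the slowest, as a % of the slowest."""
    if not speeds or min(speeds) <= 0:
        raise ValueError("need at least one positive speed")
    slowest, fastest = min(speeds), max(speeds)
    return (fastest - slowest) / slowest * 100.0

# Hypothetical read speeds in MB/s from four back-to-back runs (illustrative only)
reads = [400.0, 650.0, 790.0, 800.0]
print(f"{percent_spread(reads):.0f}%")  # -> 100%
```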

In most cases, the first test is the slowest. Then, as the storage system warms up or disk caches become enabled, performance, especially read performance, skyrockets.

I’ve noticed this in a number of performance tests, but this example is a good illustration, and I remembered to keep my test numbers. I mention it here because, while I knew test results varied, I did not realize they varied by this much.

Going forward, when I test for performance, I plan to run at least five tests, then average them to get a better representation of performance. In the past, I would only run two or three. Also, I probably won’t record the first test, because it does not seem to be representative of what the gear can do in real life.
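The plan above (drop the first, warm-up run and average the rest) can be sketched in a few lines of Python. This is a minimal illustration, not a tool the article uses. `summarize_runs` and its `discard_warmup` parameter are hypothetical names, and the assumption here is six runs, so that five remain after the warm-up run is dropped. Reporting the standard deviation alongside the mean shows whether the remaining runs still disagree.

```python
from statistics import mean, stdev

def summarize_runs(speeds, discard_warmup=1):
    """Drop the first `discard_warmup` runs, then return (mean, stdev) of the rest."""
    kept = speeds[discard_warmup:]
    if len(kept) < 2:
        raise ValueError("need at least two runs after discarding warm-up runs")
    return mean(kept), stdev(kept)

# Hypothetical read speeds (MB/s) from six runs; the first is a cold-cache warm-up
runs = [410.0, 780.0, 800.0, 790.0, 810.0, 810.0]
avg, sd = summarize_runs(runs)
print(f"mean {avg:.0f} MB/s, stdev {sd:.1f} MB/s over {len(runs) - 1} runs")
```

A large standard deviation after dropping the first run is a hint that the system still isn't at a steady state, and that more runs are needed.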

I found these results interesting and wanted to share them with you to use for your own tests.


2 Responses to A Caution on Measuring Storage Speed

Hello Larry

Just a remark from a QA guy:

You should consider running different test types in your sequence to somewhat reset the system. Warming up makes the final result quite vulnerable to all kinds of lurking variables.

If you plan to run these different types as you did with your comparisons between the compressors, create a randomized sequence of them. Your 5 replicates will be much more meaningful.

Best Olaf

Olaf:

In other words, run a mix of tests, not just the same one multiple times. Good suggestion, thanks!

Larry
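Olaf's suggestion of a randomized sequence can be sketched as a run schedule. Each test type is repeated a fixed number of times, and the whole list is then shuffled, so warm-up effects don't always favor the same test. This is a minimal Python illustration under stated assumptions. The test names and `randomized_schedule` are hypothetical, the sketch only produces the order, and it doesn't run any benchmark. The optional `seed` makes a schedule reproducible.

```python
import random

def randomized_schedule(test_types, replicates=5, seed=None):
    """Return every test type repeated `replicates` times, in shuffled order."""
    schedule = [t for t in test_types for _ in range(replicates)]
    rng = random.Random(seed)  # seeded generator so the order can be repeated
    rng.shuffle(schedule)
    return schedule

# Hypothetical test names; substitute whatever benchmarks you actually run
tests = ["seq_write", "seq_read", "random_read"]
for i, name in enumerate(randomized_schedule(tests, replicates=5, seed=42), start=1):
    print(i, name)
```

Running each test type five times in shuffled order gives the five replicates Olaf mentions, without every replicate of one test sitting back to back.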